We have updated Neural Network Console Windows today. This post introduces the new functionalities and how to use them.

・Error (misclassification) analysis function, Sorting inference results

・Output html report

・Other functionalities and improvements

1. Error (misclassification) analysis function & sorting inference results

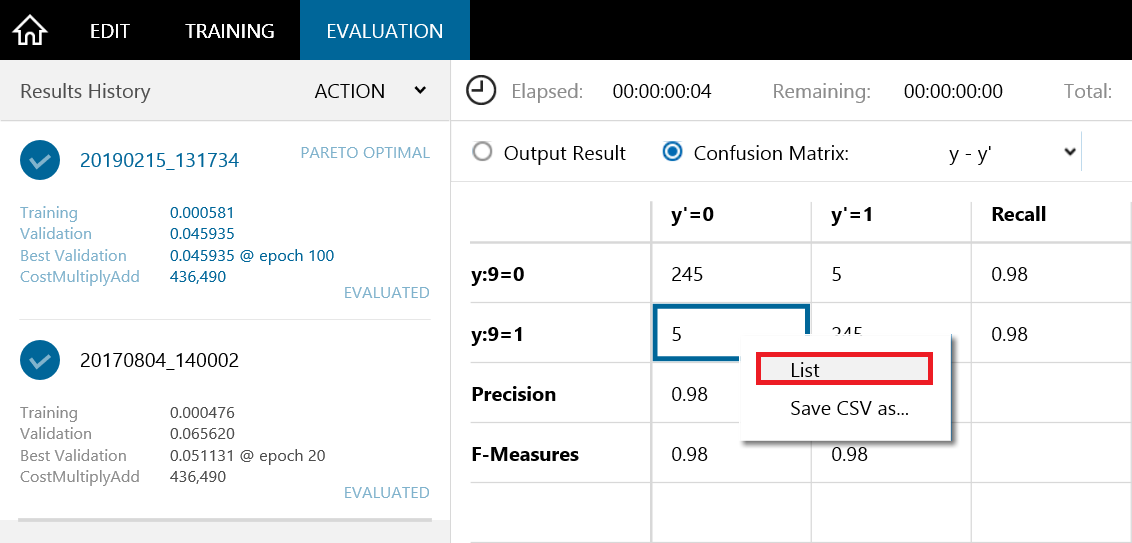

For binary or multi-way classification problems, users can now display misclassified data directly from the confusion matrix of the inference results. To do so, double-click the corresponding cell in the confusion matrix, or select List from the right-click menu.

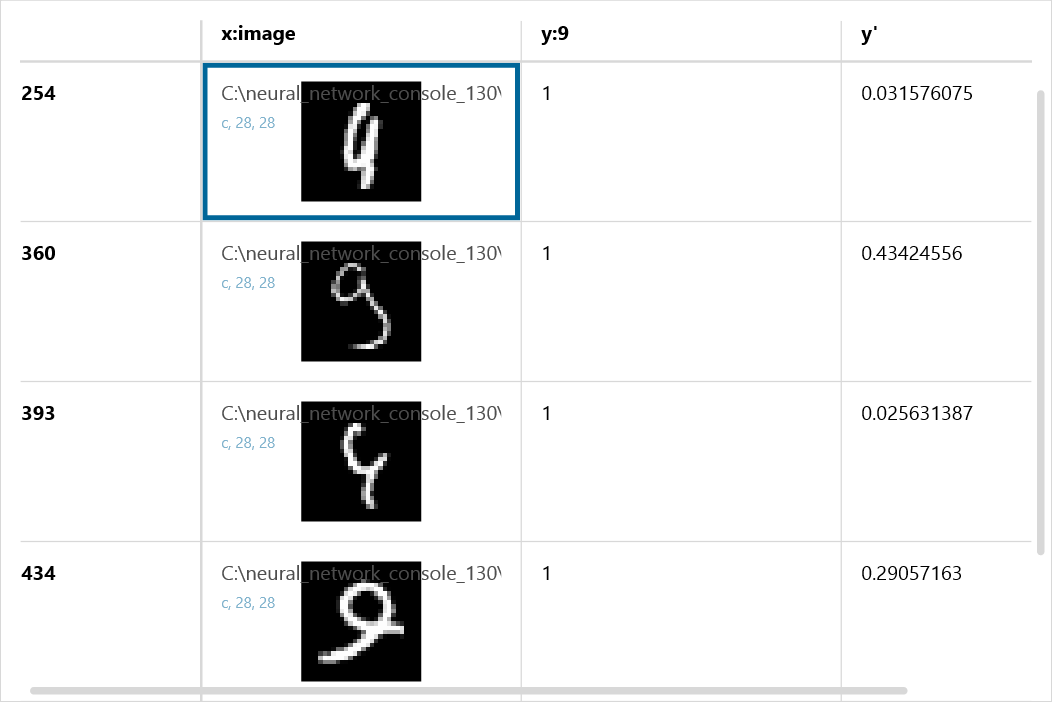

Below is an example showing the data in which a hand-written “9” was misclassified as “4” in the sample project 02_binary_cnn.

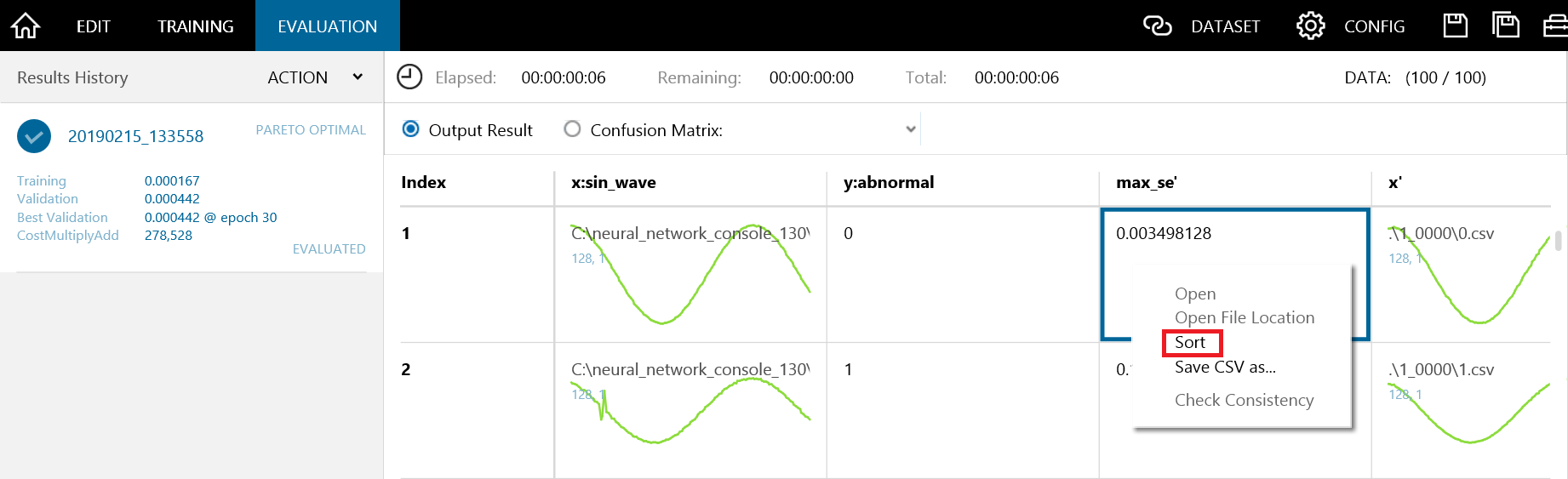
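The idea behind this function can be sketched outside the tool as well. The snippet below is a minimal illustration, not Neural Network Console's implementation: it builds a confusion matrix from true and predicted labels and lists the dataset indices that fall into one cell. The label encoding (0 for "4", 1 for "9") and the function names are assumptions made for this example.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, num_classes):
    """Count samples per (true label, predicted label) pair."""
    matrix = np.zeros((num_classes, num_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        matrix[t, p] += 1
    return matrix

def samples_in_cell(y_true, y_pred, true_label, pred_label):
    """Return the dataset indices that fall into one confusion matrix cell."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    return np.flatnonzero((y_true == true_label) & (y_pred == pred_label))

# Hypothetical labels: 0 stands for "4" and 1 stands for "9".
y_true = [0, 1, 1, 0, 1, 1]
y_pred = [0, 0, 1, 0, 0, 1]

matrix = confusion_matrix(y_true, y_pred, num_classes=2)
# Samples whose true label is "9" but were predicted as "4".
nine_as_four = samples_in_cell(y_true, y_pred, true_label=1, pred_label=0)

print(matrix)
print(nine_as_four)
```

Double-clicking a cell in the tool corresponds to the `samples_in_cell` lookup here: the cell fixes a (true, predicted) pair, and the list shows every sample matching it.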

In numerical prediction problems, where a confusion matrix cannot be applied, users can sort and display the entire dataset based on the inference results.

To sort the data, double-click the row to be sorted in Output Result, or select Sort from the right-click menu.

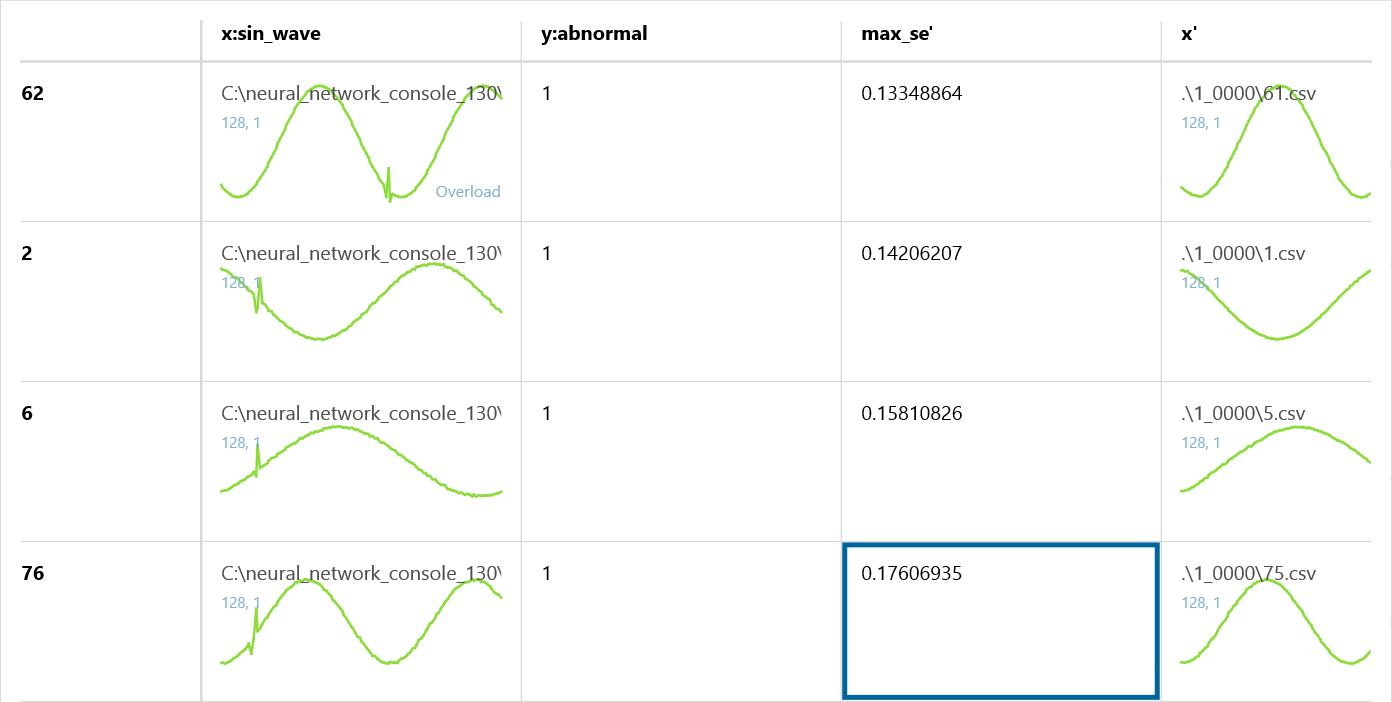

Below is an example displaying the sorted anomaly data in the anomaly detection sample project (\samples\sample_project\tutorial\anomaly_detection\sin_wave_anomaly_detection).

Using these functionalities, inference results can be analyzed more quickly and easily.
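As a rough illustration of what sorting by inference result means, the sketch below orders samples by a per-sample score so that the most anomalous ones come first. The score values are made up for this example, and the snippet does not read any file produced by Neural Network Console.

```python
import numpy as np

# Hypothetical inference output: one anomaly score per sample.
scores = np.array([0.02, 0.91, 0.15, 0.67])

# Indices ordered from the highest score to the lowest.
order = np.argsort(-scores)

for rank, index in enumerate(order, start=1):
    print(rank, index, scores[index])
```

Sorting in descending order brings the likely anomalies to the top of the list, which is usually where inspection should start.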

2. Output html report (beta)

In addition to the pptx report output added in Version 1.20, Neural Network Console can now output reports on experiment results in html format. This functionality helps not only with preserving experiments, but also with sharing experiment results more efficiently.

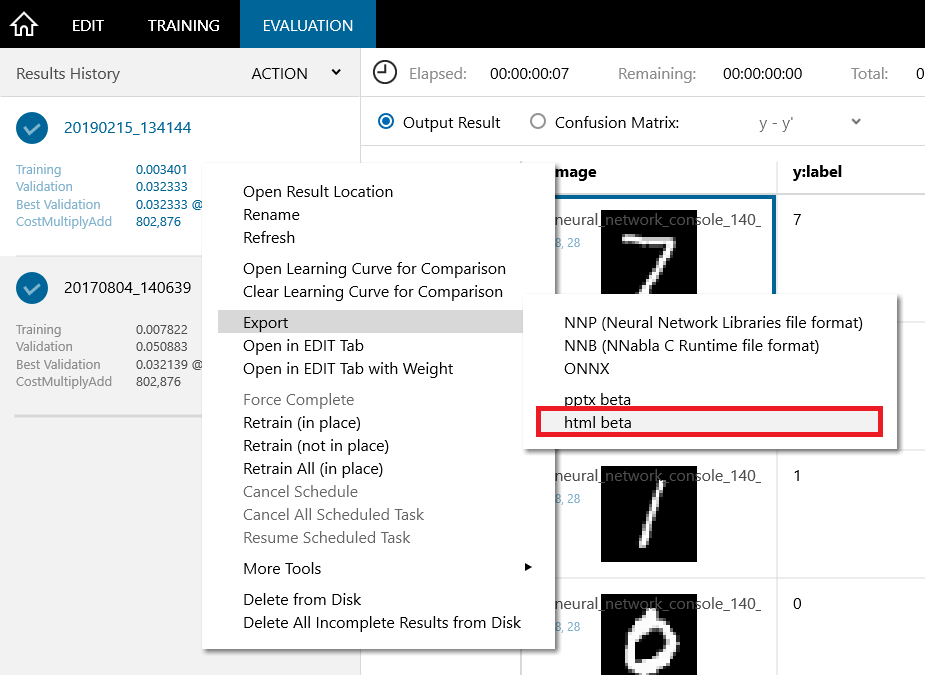

To output the results as html, right-click the training results on the TRAINING or EVALUATION tab, and select Export, html beta from the menu.

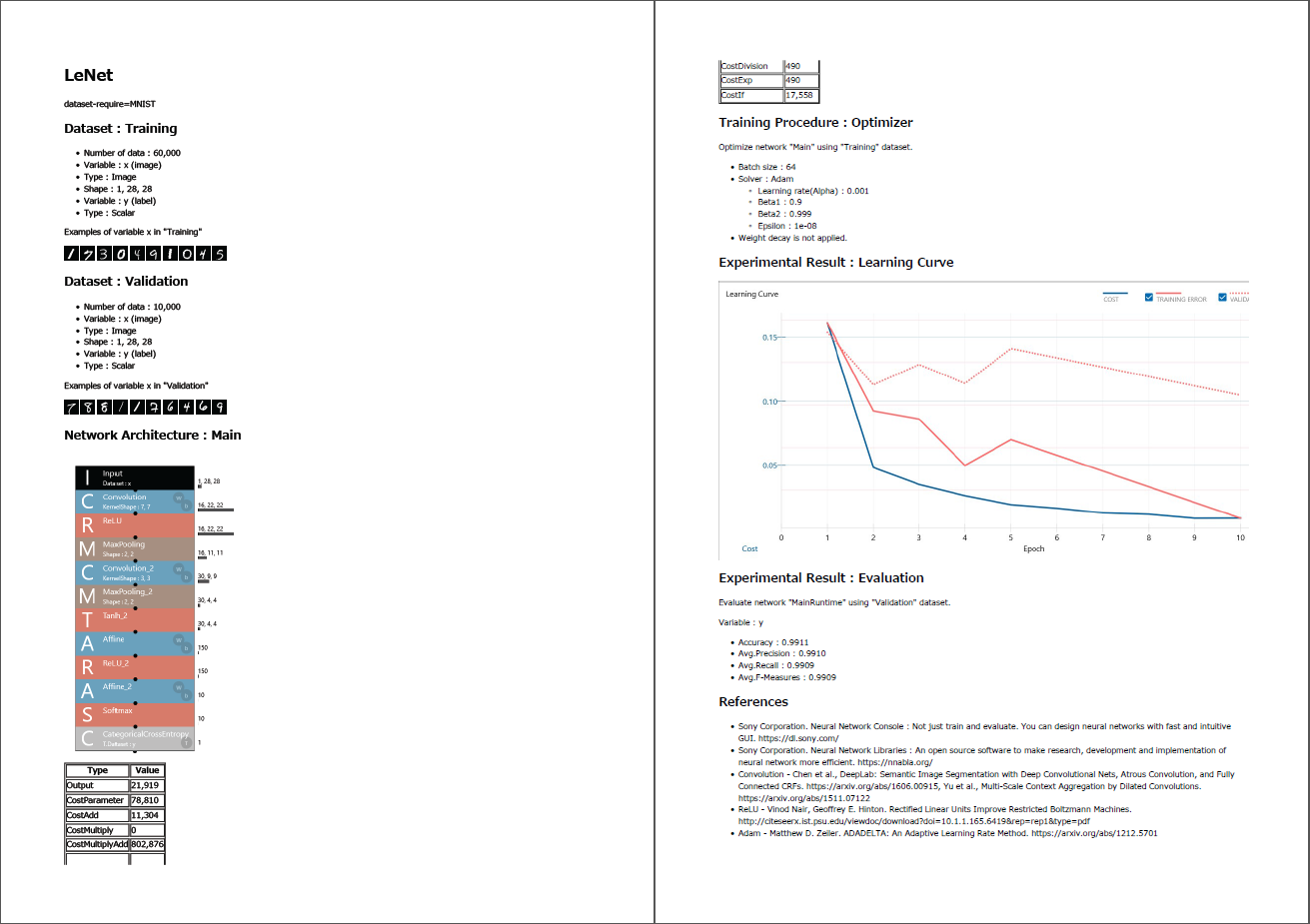

Below is an example of an html report output for the LeNet sample project.

With the html report output, the dataset, network architecture, training settings, test results, and even references used in the experiment can be organized in a single html file. The contents of the html file can easily be copied and pasted into Word files, blogs, and so on.

3. Other functionalities & improvements

We have also implemented the following functionalities and improvements.

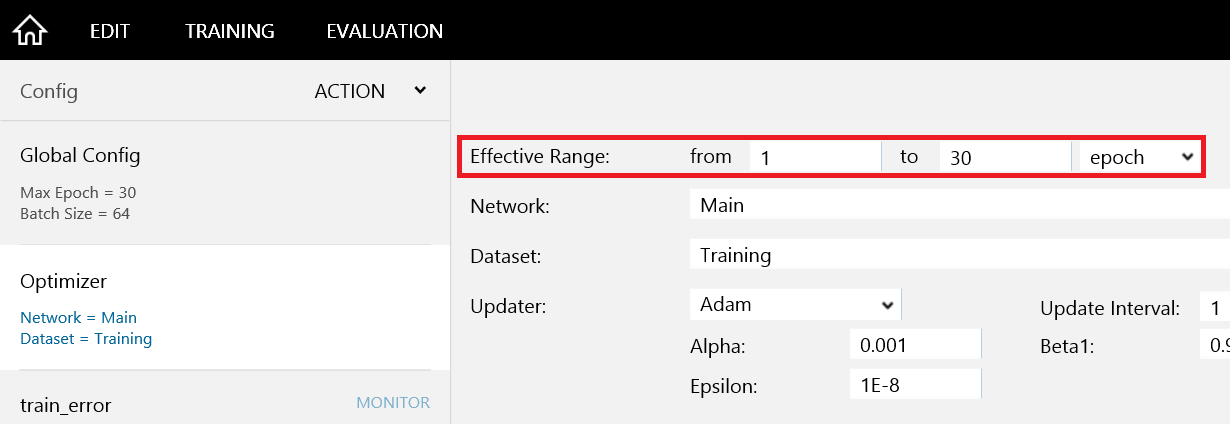

・Addition of Range setting in Optimizer

Users can now set the range (in iterations or epochs) over which each optimizer is effective. By using multiple optimizers, it is possible to change the network, dataset, and hyper-parameters even in the middle of training.
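Conceptually, a range turns each optimizer into a training stage that is active only for part of the run. The following is a minimal sketch of that idea under stated assumptions: the `OptimizerStage` class, the stage names, and the choice of an inclusive start and exclusive end are all hypothetical and do not describe how Neural Network Console stores or evaluates the Range setting.

```python
from dataclasses import dataclass

@dataclass
class OptimizerStage:
    name: str
    start: int  # first iteration at which the stage is active (inclusive, assumed)
    end: int    # iteration at which the stage stops being active (exclusive, assumed)

def active_stages(stages, iteration):
    """Return the names of all stages whose range covers the given iteration."""
    return [s.name for s in stages if s.start <= iteration < s.end]

# Hypothetical two-stage schedule: a pretraining stage followed by fine-tuning.
stages = [
    OptimizerStage("pretrain", 0, 1000),
    OptimizerStage("finetune", 1000, 3000),
]

print(active_stages(stages, 500))
print(active_stages(stages, 1500))
```

Because each stage can point at its own network, dataset, and hyper-parameters, switching the active stage is what allows these to change partway through training.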

・Addition of new layers, Warmup Scheduler

Four types of layers and the warmup option for the learning rate scheduler, both introduced in a recent version of Neural Network Libraries, have also been added.
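For readers unfamiliar with warmup, the sketch below shows a generic linear warmup: the learning rate ramps up from a small value to the base learning rate over the first iterations, then stays at the base value. This is an illustration of the general technique only; the function name and the exact ramp formula are assumptions and may differ from the scheduler shipped in Neural Network Console or Neural Network Libraries.

```python
def warmup_learning_rate(base_lr, iteration, warmup_iterations):
    """Linearly ramp the learning rate during the first warmup_iterations steps."""
    if warmup_iterations <= 0 or iteration >= warmup_iterations:
        # Warmup disabled or already finished: use the base learning rate.
        return base_lr
    # Iteration 0 yields base_lr / warmup_iterations; the last warmup step yields base_lr.
    return base_lr * (iteration + 1) / warmup_iterations

for it in (0, 4, 9, 100):
    print(it, warmup_learning_rate(0.1, it, warmup_iterations=10))
```

In practice the warmup factor is usually combined with another scheduler (for example step or cosine decay), which takes over once the warmup period ends.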

・New sample projects

Various sample projects have been added, where users can easily download the datasets and perform training and inference.

Waveform anomaly detection with artificial data.

samples\sample_project\tutorial\anomaly_detection

Description of the trained neural networks, including feature weight training, normalization, attention, and visualization of each layer’s recognition results.

samples\sample_project\tutorial\explainable_dl

Training with Mixup

samples\sample_project\image_recognition\CIFAR10\resnet\resnet-110-mixup.sdcproj
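As background, mixup (see the reference at the end of this post) trains on convex combinations of pairs of examples and their labels, with the mixing coefficient drawn from a Beta(alpha, alpha) distribution. The NumPy sketch below illustrates that formulation on random data; the function name, the batch shape, and the use of one coefficient per batch are choices made for this example and are not taken from the sample project.

```python
import numpy as np

def mixup_batch(x, y_onehot, alpha, rng):
    """Mix each example with a randomly chosen partner from the same batch."""
    lam = rng.beta(alpha, alpha)       # mixing coefficient in [0, 1]
    perm = rng.permutation(len(x))     # partner index for every example
    mixed_x = lam * x + (1.0 - lam) * x[perm]
    mixed_y = lam * y_onehot + (1.0 - lam) * y_onehot[perm]
    return mixed_x, mixed_y, lam

rng = np.random.default_rng(0)
x = rng.random((4, 3, 32, 32))         # hypothetical batch of CIFAR-10-sized images
y = np.eye(10)[[1, 3, 5, 7]]           # one-hot labels for 10 classes

mixed_x, mixed_y, lam = mixup_batch(x, y, alpha=1.0, rng=rng)
print(mixed_x.shape, mixed_y.shape)
```

Each mixed label is a soft target that still sums to one, so the usual cross-entropy loss can be applied to it without modification.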

Weakly-supervised training with DeepMIL, which can localize object positions by training with labels only.

samples\sample_project\image_recognition\CIFAR10\resnet\resnet-110-deepmil.sdcproj
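The core idea in the paper by Oquab et al. listed at the end of this post is that a network producing a per-class score map can be trained from image-level labels by taking the maximum over spatial positions, and the position of that maximum then hints at where the object is. The sketch below illustrates only this pooling step on a hand-made score map; the function name and array layout are assumptions, and the sample project's actual network may differ.

```python
import numpy as np

def image_scores_and_locations(score_maps):
    """Global max pooling over a (num_classes, height, width) score map.

    Returns the image-level score per class and the (row, col) of each maximum.
    """
    num_classes, height, width = score_maps.shape
    flat = score_maps.reshape(num_classes, -1)
    scores = flat.max(axis=1)
    rows, cols = np.unravel_index(flat.argmax(axis=1), (height, width))
    return scores, np.stack([rows, cols], axis=1)

# Hypothetical score maps for two classes on a 4x4 grid.
score_maps = np.zeros((2, 4, 4))
score_maps[0, 1, 2] = 3.0
score_maps[1, 3, 0] = 5.0

scores, locations = image_scores_and_locations(score_maps)
print(scores)
print(locations)
```

Only the pooled scores are compared with the image-level labels during training, which is why no position annotations are needed.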

We will continue to update the sample projects.

We will also continue to improve Neural Network Console. We look forward to hearing feedback from users for further improvements!

Neural Network Console Windows

https://dl.sony.com/ja/app/

mixup: Beyond Empirical Risk Minimization.

Hongyi Zhang, Moustapha Cisse, Yann N. Dauphin, David Lopez-Paz.

https://arxiv.org/abs/1710.09412

Is object localization for free? – Weakly-supervised learning with convolutional neural networks.

M. Oquab, L. Bottou, I. Laptev, and J. Sivic.

In CVPR, pages 685-694, 2015.